How Generative AI Is Revolutionizing Robot Training with Realistic Virtual Worlds

Chatbots like ChatGPT and Claude have woven themselves into the fabric of our digital lives thanks to their versatility, handling tasks that range from debugging code to crafting poetry. They owe that finesse to the vast amounts of internet text on which they are trained. Training robots that operate in physical environments, however, requires far more than text. Robots need visual and physical context to interact with their surroundings, whether that means placing a coffee cup on a table or stacking dishes without a clatter. Learning these skills is no small feat: each task requires demonstrations, much like a how-to guide. The hitch? Gathering real-world demonstrations is laborious and expensive, and the results can be inconsistent.

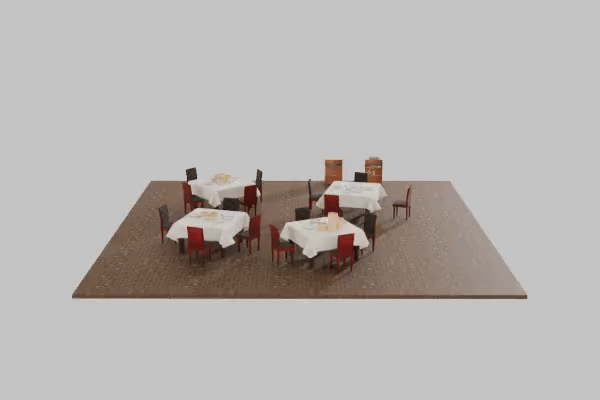

This is where work from MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) and the Toyota Research Institute comes in. The researchers developed a method called steerable scene generation, which creates virtual 3D environments, such as kitchens or restaurants, in which many different robotic tasks can be simulated. The method is built on a diffusion model, a type of generative AI model that starts from random noise and gradually refines it into a structured output, in this case a 3D scene. The generated scenes are meant to be physically plausible: for instance, a fork will not pass through a soup bowl.
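To make the "start from noise, refine step by step" idea concrete, here is a minimal sketch of a DDPM-style reverse process applied to object coordinates. It is only an illustration, not the researchers' model: the function name `ddpm_sample`, the noise schedule, and the target layout are assumptions, and the noise predictor is a hand-written oracle that already knows the target, standing in for the neural network a real diffusion model would learn from data.

```python
import math
import random


def ddpm_sample(target, steps=50, seed=0):
    """Run a DDPM-style reverse process from pure noise toward `target`.

    `target` is a flat list of coordinates (e.g. x, y per object).
    ASSUMPTION: the noise estimate below comes from an oracle that knows
    the target; a real system would use a trained network instead.
    """
    rng = random.Random(seed)

    # Linear noise schedule (hypothetical values) and cumulative alpha products.
    betas = [1e-4 + (0.2 - 1e-4) * t / (steps - 1) for t in range(steps)]
    alphas = [1.0 - b for b in betas]
    alpha_bars = []
    prod = 1.0
    for a in alphas:
        prod *= a
        alpha_bars.append(prod)

    # Start from pure Gaussian noise, one value per coordinate.
    x = [rng.gauss(0.0, 1.0) for _ in target]

    # Walk the schedule backwards, removing a little noise at each step.
    for t in reversed(range(steps)):
        a, ab, b = alphas[t], alpha_bars[t], betas[t]
        new_x = []
        for xi, mu in zip(x, target):
            # Oracle estimate of the noise that separates xi from the target.
            eps_hat = (xi - math.sqrt(ab) * mu) / math.sqrt(1.0 - ab)
            # Standard DDPM posterior mean given the noise estimate.
            mean = (xi - b / math.sqrt(1.0 - ab) * eps_hat) / math.sqrt(a)
            # Fresh noise is added at every step except the final one.
            noise = rng.gauss(0.0, 1.0) if t > 0 else 0.0
            new_x.append(mean + math.sqrt(b) * noise)
        x = new_x
    return x


if __name__ == "__main__":
    # Hypothetical layout: (x, y) of a bowl and a fork on a unit table.
    layout = [0.2, 0.5, 0.8, 0.5]
    print(ddpm_sample(layout))
```

Because the oracle knows the answer, the loop always lands on the target layout. A learned model has no such shortcut, which is why its output varies from sample to sample and why additional steering is useful.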

The standout feature of the method is its use of Monte Carlo tree search (MCTS), a strategy known from game-playing AI systems such as AlphaGo. With MCTS, the model explores multiple ways of building up a scene and selects the version that best meets the goal at hand, whether that is physical realism or, say, maximizing the variety of food items in a kitchen. Nicholas Pfaff, the MIT EECS PhD student leading the project, explains that this is the first application of MCTS to scene generation, made possible by framing scene generation as a sequential decision-making process. This lets the system create scenes more complex than those in the initial training set.
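The idea of treating scene construction as a sequence of decisions can be sketched with a small, generic MCTS loop. The setup below is a toy assumption rather than the researchers' system: a hypothetical `CATALOG` of items with widths, a shelf of fixed `SHELF_WIDTH`, a validity rule that items must fit on the shelf, and a `reward` that counts distinct item kinds, loosely mirroring the "variety of food items" goal.

```python
import math
import random

# Hypothetical catalog: item name -> width on the shelf (arbitrary units).
CATALOG = {"apple": 1, "jar": 1, "bread": 2, "banana": 2, "cereal": 3}
SHELF_WIDTH = 6


def legal_actions(scene):
    """Items that still fit on the shelf next to what is already placed."""
    free = SHELF_WIDTH - sum(CATALOG[item] for item in scene)
    return [item for item, width in CATALOG.items() if width <= free]


def reward(scene):
    """Toy objective: fraction of catalog kinds present in the scene."""
    return len(set(scene)) / len(CATALOG)


class Node:
    def __init__(self, scene, parent=None):
        self.scene = scene                  # tuple of items placed so far
        self.parent = parent
        self.children = {}                  # action -> Node
        self.untried = legal_actions(scene)
        self.visits = 0
        self.total = 0.0

    def ucb_child(self, c=1.4):
        """Child with the best balance of average reward and exploration."""
        return max(
            self.children.values(),
            key=lambda n: n.total / n.visits
            + c * math.sqrt(math.log(self.visits) / n.visits),
        )


def rollout(scene, rng):
    """Finish a partial scene by adding random items until nothing fits."""
    scene = list(scene)
    while True:
        actions = legal_actions(scene)
        if not actions:
            return tuple(scene)
        scene.append(rng.choice(actions))


def mcts(iterations=500, seed=0):
    rng = random.Random(seed)
    root = Node(())
    best_scene, best_reward = (), -1.0
    for _ in range(iterations):
        node = root
        # 1. Selection: descend through fully expanded nodes.
        while not node.untried and node.children:
            node = node.ucb_child()
        # 2. Expansion: try one new placement from this node.
        if node.untried:
            action = node.untried.pop(rng.randrange(len(node.untried)))
            child = Node(node.scene + (action,), parent=node)
            node.children[action] = child
            node = child
        # 3. Simulation: complete the scene at random and score it.
        final = rollout(node.scene, rng)
        r = reward(final)
        if r > best_reward:
            best_scene, best_reward = final, r
        # 4. Backpropagation: update statistics along the path to the root.
        while node is not None:
            node.visits += 1
            node.total += r
            node = node.parent
    return best_scene, best_reward


if __name__ == "__main__":
    print(mcts())
```

Here the search returns the best complete scene seen during any rollout, a common choice for single-agent problems. In the real system, the candidate placements would presumably come from the diffusion model rather than a fixed catalog.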

Another notable feature is how the model learns. It can be refined with reinforcement learning, receiving a “reward” for producing scenes that meet a specified objective. Over time, the model learns to generate environments that closely match the desired outcome. Users can also steer the system directly with typed visual descriptions such as “create a kitchen setup with four apples and a bowl placed on the table.” The results are impressive: the model outperforms comparable methods on these tasks by a margin of at least 10 percent. But that’s not all. The model can also modify existing scenes on command, rearranging objects or adding new ones while keeping the rest of the environment intact. It is like having a personal virtual set designer with a grasp of both aesthetics and physics.
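What such a “reward” might look like for the apples-and-bowl prompt can be sketched in a few lines. This is an illustrative assumption, not the scoring used in the research: the scene format (a list of dictionaries with `kind` and `on` keys) and the function `prompt_reward` are hypothetical, and the score simply measures how closely the object counts on the table match the request.

```python
from collections import Counter


def prompt_reward(scene, required, surface="table"):
    """Score in [0, 1]: how well object counts on `surface` match `required`.

    ASSUMPTION: `scene` is a list of dicts like {"kind": "apple", "on": "table"}.
    Missing objects lower the score, and so do surplus copies of requested kinds.
    """
    counts = Counter(obj["kind"] for obj in scene if obj["on"] == surface)
    total_required = sum(required.values())
    matched = sum(min(counts[kind], n) for kind, n in required.items())
    extras = sum(max(counts[kind] - n, 0) for kind, n in required.items())
    return max(0.0, (matched - extras) / total_required)


if __name__ == "__main__":
    wanted = {"apple": 4, "bowl": 1}
    scene = [{"kind": "apple", "on": "table"} for _ in range(4)]
    scene.append({"kind": "bowl", "on": "table"})
    print(prompt_reward(scene, wanted))
```

In reinforcement learning, a score like this would be fed back to the generator so that scenes matching the request become more likely over time.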

The real strength of the system is its ability to generate training data for roboticists. The virtual environments become a training ground where robots learn tasks such as arranging cutlery or placing food on plates, and the lifelike simulations make an ideal sandbox for preparing robots for the real world. Future versions aim to include interactive elements, like cabinets or jars, that robots can open, adding another layer of realism. Pfaff also notes that the scenes used for pre-training may differ from the scenes one actually wants. “Using our steering methods, we can move beyond that broad distribution and sample from a ‘better’ one.”

Looking ahead, the team hopes to incorporate real-world images into the training data using a technique called Scalable Real2Sim, which would let the system build environments closer to those robots will encounter in reality. Industry experts are optimistic about the work. Jeremy Binagia, an applied scientist at Amazon Robotics, noted that steerable scene generation ensures physical feasibility and accounts for full 3D translation, which yields much more interesting scenes. The research was supported by Amazon and the Toyota Research Institute and was presented at the Conference on Robot Learning. More details are available in the original article on MIT News.