Exploring the Future of Generative AI Through Wearable Sensor Data

Diving Deep Into Generative AI and Its Impact on Digital Health

Picture a world where artificial intelligence (AI) systems go beyond mimicking human creativity: this is the world of generative AI. Generative AI has quickly become a cornerstone of modern technology. It encompasses systems that can generate fresh content such as text, images, music, and even code. These models do more than repackage information: they draw on patterns learned from existing data to produce new, often surprising outputs.

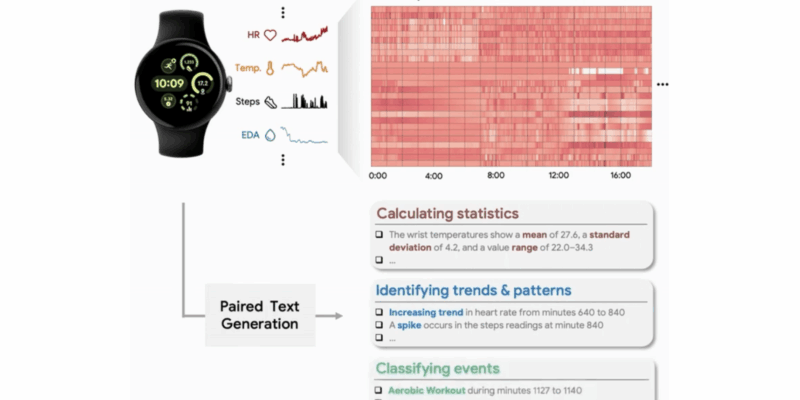

For many, generative AI brings to mind applications such as ChatGPT or image generators like DALL·E, but the domain is expanding. Researchers are now exploring where generative AI intersects with wearable sensor data, and Google has placed itself at the forefront with a project known as SensorLM.

SensorLM is a pioneering attempt to teach AI to understand the unique “language” of wearable sensors. It takes its cues from large language models, drawing on a vast amount of time-series data from wearable sensors such as accelerometers and gyroscopes. The goal? To interpret human activity and physiological signals with unprecedented precision.

The Promising Future of SensorLM

The potential impact of SensorLM should not be underestimated. Wearable gadgets such as fitness trackers, smartwatches, and advanced medical monitors are practically omnipresent, and they generate a continuous stream of rich data that’s often underutilized. Applying generative AI models to this data could open a new era in health monitoring, anomaly detection, and even the prediction of future conditions.

But introducing AI to sensor data isn’t without its challenges. Sensor data is characteristically noisy and can vary drastically across users and devices. Teaching models to interpret this type of data requires not only massive amounts of training data, but also suitable model architectures and learning strategies. That’s where SensorLM shines, building on techniques like masked modeling and pretraining on large data sets. In masked modeling, parts of the sensor data are hidden and the model learns to predict them, which strengthens its grasp of the underlying structure and patterns.
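To make the idea of masked modeling concrete, here is a minimal sketch of the objective in Python with NumPy. Everything in it is an assumption made for illustration: the synthetic signal stands in for one accelerometer axis, the helper names (`make_signal`, `mask_patches`, `interpolate_masked`, `masked_mse`) are hypothetical, and the "predictor" is plain linear interpolation rather than a trained network. It is not SensorLM's code or architecture; it only shows how patches of a time series can be hidden and how a reconstruction loss can be scored on the hidden positions alone.

```python
import numpy as np


def make_signal(n_steps, rng):
    """Synthetic stand-in for one accelerometer axis: a slow sine plus noise."""
    t = np.arange(n_steps)
    return np.sin(2 * np.pi * t / 50) + 0.1 * rng.standard_normal(n_steps)


def mask_patches(signal, patch_len, mask_ratio, rng):
    """Hide a random subset of fixed-length patches; return (corrupted, mask)."""
    n_patches = len(signal) // patch_len
    n_masked = max(1, int(round(mask_ratio * n_patches)))
    hidden = rng.choice(n_patches, size=n_masked, replace=False)
    mask = np.zeros(len(signal), dtype=bool)
    for p in hidden:
        mask[p * patch_len:(p + 1) * patch_len] = True
    corrupted = signal.copy()
    corrupted[mask] = 0.0  # placeholder value for the hidden samples
    return corrupted, mask


def interpolate_masked(corrupted, mask):
    """Stand-in predictor: fill hidden samples by linear interpolation
    between the visible samples (a real model would be learned instead)."""
    idx = np.arange(len(corrupted))
    filled = corrupted.copy()
    filled[mask] = np.interp(idx[mask], idx[~mask], corrupted[~mask])
    return filled


def masked_mse(pred, target, mask):
    """Reconstruction loss computed only on the hidden positions."""
    return float(np.mean((pred[mask] - target[mask]) ** 2))


rng = np.random.default_rng(0)
signal = make_signal(500, rng)
corrupted, mask = mask_patches(signal, patch_len=5, mask_ratio=0.3, rng=rng)

print("zero-fill loss:    ", masked_mse(corrupted, signal, mask))
print("interpolation loss:", masked_mse(interpolate_masked(corrupted, mask), signal, mask))
```

In a real pretraining setup, a neural network would take the place of `interpolate_masked`, and its parameters would be updated to reduce `masked_mse` over many recordings. Scoring the loss only on hidden samples is what forces the model to infer structure from the visible context instead of copying its input.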

Let’s take a moment to picture the world reshaped by this research. Imagine your smartwatch identifying your unique movement patterns and notifying you about early signs of fatigue or illness. Imagine a physical therapy program crafted around your individual needs, offering real-time feedback and custom exercises backed by wearable sensors and generative AI. These might sound like far-off dreams, but with projects such as SensorLM, they could be our near future.

A Glimpse Into the Future

The landscape of generative AI is evolving beyond the digital confines of words and images. By stepping into the realm of physical data, projects like SensorLM are opening new ways for AI systems to understand human activity. As this technology matures, we can anticipate more intuitive, adaptive, and personalized systems with a deeper understanding of the human experience.

Ready to learn more? For the full details of SensorLM and its approach to wearable sensor data, see the original piece on Google Research: “SensorLM: Learning the Language of Wearable Sensors.”