Teaching AI to Recognize Your Pet: New MIT Method Trains Models to Spot Personalized Objects

Picture your French Bulldog, Bowser, at the local dog park. Among all the dogs running around, you can pick out Bowser at a glance. But what if you wanted an AI model to do the same while you are at the office? That is where things get complicated.

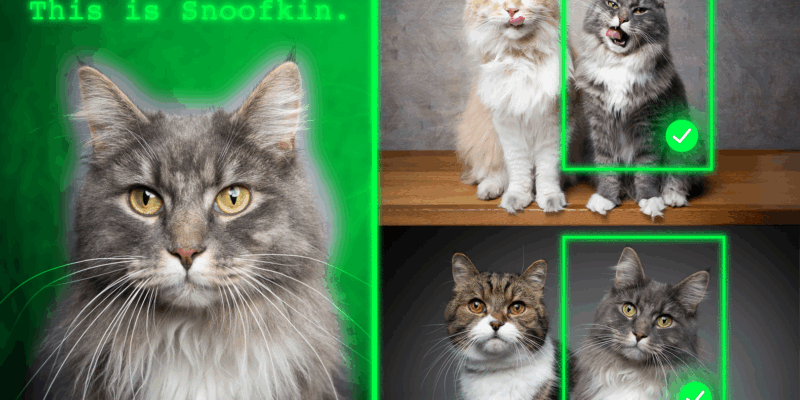

Current vision-language models (VLMs), such as GPT-5, are good at recognizing general objects: identifying a ‘dog’ or a ‘tree’ is easy. The difficulty arises when a model is asked to locate a specific, personalized object. Asked to find Bowser the Frenchie in a line-up of French Bulldogs, it would probably fail. That is an obstacle for anyone hoping to use AI for tasks such as pet monitoring, object tracking, or assistive technology.

The Pursuit of Personalization

To bridge this gap, researchers from MIT and the MIT-IBM Watson AI Lab developed a new training method that helps AI models recognize personalized objects more reliably across diverse scenes. They re-trained VLMs on specially curated video-tracking data, in which the same object is followed across a series of frames. This setup pushes the model to rely on contextual clues rather than memorized knowledge. At inference time, the model is given a handful of sample images of a specific object, such as a pet or a backpack. The re-trained system is then much better at identifying that object in new images, while retaining the model’s broader capabilities.
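As a rough illustration of the idea, the sketch below turns one video track into a single few-shot training sample: a few frames of the same object serve as context, and a later frame becomes the query to localize. This is not the authors' pipeline. The names `TrackedFrame`, `Episode`, and `build_episode`, the bounding-box format, and the choice to space the frames evenly along the track are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Tuple

Box = Tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max), assumed format


@dataclass(frozen=True)
class TrackedFrame:
    """One video frame plus the tracked object's box (hypothetical type)."""
    image_path: str
    box: Box


@dataclass(frozen=True)
class Episode:
    """A few-shot sample: context frames with boxes, then a query frame."""
    context: List[TrackedFrame]
    query_image: str
    target_box: Box


def build_episode(track: List[TrackedFrame], num_context: int = 3) -> Episode:
    """Pick evenly spaced frames from one track; the last pick is the query."""
    if num_context < 1:
        raise ValueError("num_context must be at least 1")
    if len(track) < num_context + 1:
        raise ValueError("track is too short for the requested context size")
    # Integer arithmetic keeps the indices distinct and in increasing order.
    last = len(track) - 1
    indices = [i * last // num_context for i in range(num_context + 1)]
    picked = [track[i] for i in indices]
    query = picked[-1]
    return Episode(context=picked[:-1],
                   query_image=query.image_path,
                   target_box=query.box)


# Toy track: ten frames of the same object drifting to the right.
track = [TrackedFrame(f"frame_{i}.jpg", (10 * i, 20, 10 * i + 50, 80))
         for i in range(10)]
episode = build_episode(track, num_context=3)
```

Spacing the picks along the track is meant to give the model views of the same object against changing backgrounds, so that the context images, not a category label, carry the identifying information.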

Bringing It to Life

This advance could matter in many areas. AI systems could track specific animals for environmental studies, and assistive technologies could help visually impaired users locate personal belongings in their homes. The technique could also improve robotics and augmented reality tools that need fast, accurate identification of specific objects in changing surroundings.

The project is led by Jehanzeb Mirza, an MIT postdoc and senior author of the research paper. Researchers from MIT, the Weizmann Institute of Science, and IBM also contributed to the work. Their findings will be presented at the upcoming International Conference on Computer Vision.

To Mimic the Human Mind

According to Mirza, the ultimate goal is for these models “to learn from context, just like humans do.” If a model can do this, then rather than being retrained for each new task, it could be given a few examples and infer how to perform the task from that context. That, in his view, would be a very powerful ability. The vision comes with open questions, however. The research community does not yet know why VLMs struggle where humans do not. The problem could lie in how the visual and language components are integrated, where some visual information might get lost, but there is no clear conclusion yet.

The team’s results are encouraging. With their newly curated dataset, they observed an average improvement of 12% in personalized object localization. When pseudo-names were used in place of actual object names, the gains reached up to 21%. The gains were also larger for larger models. Going forward, the team plans to look more closely at the inconsistencies between how VLMs and LLMs learn, and to investigate new ways of improving VLM performance without constant re-training of the models.
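The pseudo-name idea can be sketched in a few lines. The example below labels the same few-shot prompt once with a real category name and once with a made-up name, presumably so that a model cannot lean on what it already knows about the category. The helpers `make_pseudo_name` and `label_episode`, the prompt wording, and the way names are generated are hypothetical and are not taken from the paper.

```python
import random
import string
from typing import List


def make_pseudo_name(rng: random.Random, length: int = 6) -> str:
    """Generate a made-up, capitalized name (hypothetical helper)."""
    letters = [rng.choice(string.ascii_lowercase) for _ in range(length)]
    return "".join(letters).capitalize()


def label_episode(num_context: int, name: str) -> List[str]:
    """Build prompt lines that refer to the object only by the given name."""
    lines = [f"Image {i + 1}: this is {name}." for i in range(num_context)]
    lines.append(f"Query image: where is {name}?")
    return lines


rng = random.Random(0)  # seeded so the example is repeatable
pseudo = make_pseudo_name(rng)

real_prompt = label_episode(2, "the dog")   # category name is visible
pseudo_prompt = label_episode(2, pseudo)    # only the made-up name is visible
```

With the made-up name, the only way to answer the query is to match it against the context images, which is the behavior the training data is meant to encourage.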

Given the potential of quick, instance-specific grounding in practical workflows, Mirza and his team believe their data-centric approach can help bring vision-language foundation models into wider use. Joining Mirza on this work were Wei Lin, Eli Schwartz, Hilde Kuehne, Raja Giryes, Rogerio Feris, Leonid Karlinsky, Assaf Arbelle, and Shimon Ullman, with funding coming from the MIT-IBM Watson AI Lab.
